S3 is one of those services where costs grow invisibly. You add a bucket here, enable versioning there, and six months later you're paying for terabytes of data you forgot existed — old logs, stale backups, superseded deployment artifacts, and every version of every file since day one.

The fix isn't deleting things indiscriminately. It's telling S3 what to do with data automatically, based on how old it is and how often you actually need it. That's what lifecycle policies are for.

This post covers the full toolkit: storage classes, lifecycle rules, Intelligent-Tiering, versioning cleanup, and the multipart upload leak nobody talks about.

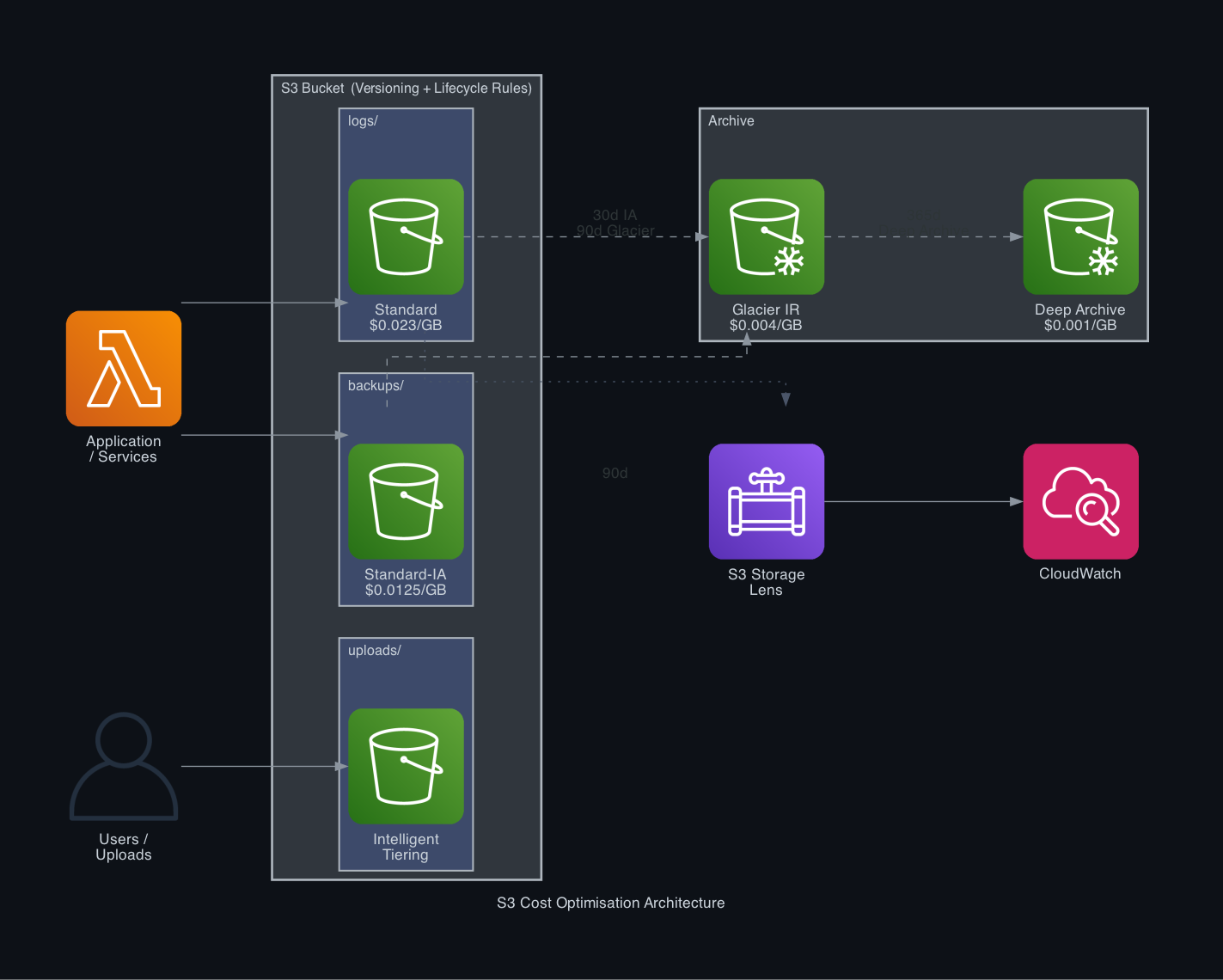

Why S3 Costs Sneak Up on You

S3 Standard costs roughly $0.023 per GB/month. That sounds trivial until you're storing 10 TB — at which point you're paying ~$230/month just for storage, before requests and data transfer.

The hidden multiplier is versioning. When versioning is enabled (which it should be for anything important), every PUT creates a new version. Delete a 1 GB file? S3 doesn't free that space — it adds a delete marker and keeps all previous versions. A bucket with versioning and no lifecycle rules is a slow, expensive leak.
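To put a number on that leak, here is a minimal Python sketch. It assumes every overwrite stores a full copy of the object and ignores the few bytes a delete marker occupies; the function name `billed_gb` is made up for this illustration.

```python
def billed_gb(object_gb: float, overwrites: int) -> float:
    """Storage billed for one key in a versioned bucket with no lifecycle rules.

    Each overwrite adds a full new version and keeps every old one.
    A later "delete" only adds a delete marker, so it frees nothing.
    """
    versions = 1 + overwrites  # the original upload plus one version per overwrite
    return object_gb * versions


# A 1 GB file rewritten once a day for 30 days, then "deleted".
total = billed_gb(1.0, overwrites=30)
print(f"{total:.0f} GB billed, ~${total * 0.023:.2f}/month at Standard pricing")
```

One file is small change. The same multiplier applied to every frequently rewritten key in a bucket is where the bill comes from.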

The other hidden cost is incomplete multipart uploads. When a large upload is split into parts and then fails or is abandoned, the parts that already arrived stay in the bucket. They don't show up in object listings, so they are easy to miss, but you're billed for them.

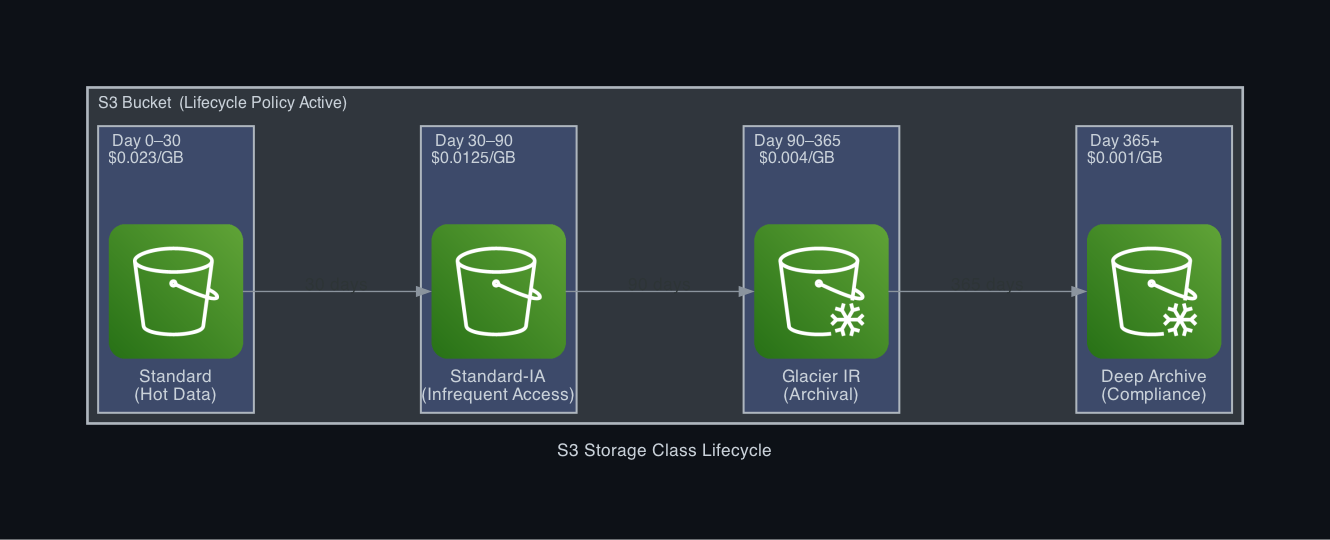

S3 Storage Classes — The Cost Ladder

Before you can write lifecycle rules, you need to understand what you're transitioning between.

| Storage Class | Use Case | Cost (per GB/month) | Retrieval |
|---|---|---|---|
| S3 Standard | Frequently accessed data | ~$0.023 | Instant |
| S3 Standard-IA | Infrequent access, kept 30+ days | ~$0.0125 | Instant (+ retrieval fee) |
| S3 One Zone-IA | Infrequent, non-critical, one AZ | ~$0.01 | Instant (+ retrieval fee) |
| S3 Glacier Instant | Archives accessed occasionally | ~$0.004 | Instant (milliseconds) |
| S3 Glacier Flexible | Archives rarely accessed | ~$0.0036 | Minutes to hours |
| S3 Glacier Deep Archive | Long-term compliance archives | ~$0.00099 | 12–48 hours |

The key transitions for most systems:

- Day 0–30: Standard (hot data, frequently accessed)

- Day 30–90: Standard-IA (still accessible instantly, lower storage cost)

- Day 90–365: Glacier Instant or Flexible (rarely touched, much cheaper)

- Day 365+: Glacier Deep Archive (compliance archives, near-zero cost)
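The ladder above can be sketched as a simple lookup from object age to storage class. This is only an illustration of the schedule, not how S3 evaluates rules internally; it assumes Glacier Instant Retrieval (`GLACIER_IR`) for the 90 to 365 day band, and the names `LADDER` and `storage_class_for_age` are hypothetical.

```python
# (minimum age in days, storage class), checked from oldest band to newest.
LADDER = [
    (365, "DEEP_ARCHIVE"),
    (90, "GLACIER_IR"),
    (30, "STANDARD_IA"),
    (0, "STANDARD"),
]


def storage_class_for_age(age_days: int) -> str:
    """Return the class an object of this age would sit in under the ladder."""
    for min_age, storage_class in LADDER:
        if age_days >= min_age:
            return storage_class
    raise ValueError("age_days must be non-negative")


for age in (5, 45, 200, 400):
    print(age, storage_class_for_age(age))
```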

Moving 1 TB (1,024 GB) from Standard to Glacier Deep Archive drops the storage cost from about $23.55/month to about $1.01/month. For a year of retained logs, that's roughly $270 saved on a single bucket.
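If you want to rerun that arithmetic for your own sizes, a small sketch like the following works. The prices are the approximate per-GB figures from the table above (they vary by region), the dictionary keys are the storage class names the S3 API uses, and 1 TB is taken as 1,024 GB.

```python
# Approximate storage prices in USD per GB-month, taken from the table above.
PRICE_PER_GB_MONTH = {
    "STANDARD": 0.023,
    "STANDARD_IA": 0.0125,
    "ONEZONE_IA": 0.01,
    "GLACIER_IR": 0.004,
    "GLACIER": 0.0036,  # Glacier Flexible Retrieval
    "DEEP_ARCHIVE": 0.00099,
}
GB_PER_TB = 1024


def monthly_cost(size_gb: float, storage_class: str) -> float:
    """Storage-only cost; requests, retrieval and transfer are not included."""
    return size_gb * PRICE_PER_GB_MONTH[storage_class]


standard = monthly_cost(GB_PER_TB, "STANDARD")
deep = monthly_cost(GB_PER_TB, "DEEP_ARCHIVE")
annual_saving = (standard - deep) * 12
print(f"Standard ${standard:.2f}/mo, Deep Archive ${deep:.2f}/mo, "
      f"saving ${annual_saving:.2f}/yr")
```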

Writing Your First Lifecycle Rule

Lifecycle rules live on the bucket. Each rule has a filter (which objects it applies to), a set of transition actions (move to cheaper storage), and optional expiration actions (delete after N days).

Via the AWS CLI — save this as lifecycle.json:

    {
      "Rules": [
        {
          "ID": "archive-and-expire-logs",
          "Status": "Enabled",
          "Filter": { "Prefix": "logs/" },
          "Transitions": [
            { "Days": 30, "StorageClass": "STANDARD_IA" },
            { "Days": 90, "StorageClass": "GLACIER_IR" },
            { "Days": 365, "StorageClass": "DEEP_ARCHIVE" }
          ],
          "Expiration": { "Days": 730 }
        }
      ]
    }

Then apply it:

    aws s3api put-bucket-lifecycle-configuration \
      --bucket my-app-logs \
      --lifecycle-configuration file://lifecycle.json

This single rule moves log files through the entire cost ladder and deletes them after 2 years, with no manual intervention. Keep in mind that put-bucket-lifecycle-configuration replaces the bucket's whole lifecycle configuration, so every rule you want to keep has to be in the file you apply.
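Before applying a file like this, it can help to sanity-check it locally. The sketch below is not S3's own validation, just two checks I am assuming are useful: transition days should strictly increase, and expiration should come after the last transition. The helper name `check_rule` is hypothetical.

```python
import json


def check_rule(rule: dict) -> list[str]:
    """Return a list of problems found in one lifecycle rule (empty if none)."""
    problems = []
    days = [t["Days"] for t in rule.get("Transitions", [])]
    if days != sorted(set(days)):
        problems.append("transition days must be strictly increasing")
    expire = rule.get("Expiration", {}).get("Days")
    if expire is not None and days and expire <= max(days):
        problems.append("expiration must come after the last transition")
    return problems


# The same rule as in lifecycle.json, inlined so the sketch runs on its own.
config = json.loads("""
{"Rules": [{"ID": "archive-and-expire-logs", "Status": "Enabled",
  "Filter": {"Prefix": "logs/"},
  "Transitions": [
    {"Days": 30, "StorageClass": "STANDARD_IA"},
    {"Days": 90, "StorageClass": "GLACIER_IR"},
    {"Days": 365, "StorageClass": "DEEP_ARCHIVE"}],
  "Expiration": {"Days": 730}}]}
""")

for rule in config["Rules"]:
    print(rule["ID"], check_rule(rule) or "looks consistent")
```

S3 still has the final say when you apply the configuration; this only catches the obvious ordering mistakes early.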

Versioning Cleanup — Where Most Savings Hide

Versioning protection is worth having. The lifecycle rules to manage it are not optional once you enable it.

Without cleanup rules, a versioned bucket accumulates:

- Noncurrent versions — old versions of files that have been overwritten

- Delete markers — tombstone objects left behind when you "delete" a versioned file

Both are billed. Neither is useful after a certain age.

Add these rules to every versioned bucket:

    {
      "Rules": [
        {
          "ID": "cleanup-old-versions",
          "Status": "Enabled",
          "Filter": {},
          "NoncurrentVersionTransitions": [
            { "NoncurrentDays": 30, "StorageClass": "STANDARD_IA" },
            { "NoncurrentDays": 90, "StorageClass": "GLACIER_IR" }
          ],
          "NoncurrentVersionExpiration": {
            "NoncurrentDays": 180,
            "NewerNoncurrentVersions": 3
          },
          "Expiration": {
            "ExpiredObjectDeleteMarker": true
          }
        }
      ]
    }

Key settings here:

- NoncurrentDays: 180 deletes a version once it has been noncurrent for 180 days (about 6 months)

- NewerNoncurrentVersions: 3 always keeps the 3 most recent noncurrent versions, regardless of age (safety net)

- ExpiredObjectDeleteMarker: true automatically cleans up orphaned delete markers, meaning markers whose older versions are all gone
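How the age limit and the keep-count interact is easier to see with a small model. The sketch below is a simplified approximation of the behaviour described above, not S3's implementation: it takes the number of days each noncurrent version has been noncurrent, sets aside the newest few, and expires the rest once they pass the age threshold. The function name is made up.

```python
def versions_to_expire(noncurrent_ages, noncurrent_days=180, newer_to_keep=3):
    """Return the ages (in days noncurrent) of versions the rule would remove.

    noncurrent_ages: how long each noncurrent version has been noncurrent.
    """
    newest_first = sorted(noncurrent_ages)      # smallest age = most recent
    beyond_kept = newest_first[newer_to_keep:]  # the newest N are always retained
    return [age for age in beyond_kept if age >= noncurrent_days]


ages = [10, 45, 200, 250, 400]
print(versions_to_expire(ages))  # prints [250, 400]
```

Note that the 200-day-old version survives even though it is past 180 days, because it is still one of the three most recent noncurrent versions.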

The Multipart Upload Leak

Every production system with file uploads or large S3 operations has this problem. A multipart upload starts, the client crashes or the network drops, and the partial upload sits in the bucket forever — invisible, billed silently.

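Fix it with a single lifecycle rule:

    {
      "Rules": [
        {
          "ID": "abort-incomplete-multipart",
          "Status": "Enabled",
          "Filter": {},
          "AbortIncompleteMultipartUpload": { "DaysAfterInitiation": 7 }
        }
      ]
    }

To see whether a bucket currently holds unfinished uploads, run:

    aws s3api list-multipart-uploads --bucket my-bucket

If that returns anything you don't recognize as an upload in progress, it's costing you money right now. The listing shows upload IDs and initiation dates but not sizes; aws s3api list-parts reports the size of each part for a given upload.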
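The upload listing itself does not include sizes. To estimate the bytes behind one upload, you can sum the `Size` field of each entry in the `Parts` array that `aws s3api list-parts` returns for that upload. A minimal sketch, using a hypothetical sample shaped like that output and assuming Standard pricing:

```python
import json


def upload_bytes(list_parts_output: dict) -> int:
    """Total bytes held by one incomplete upload, from list-parts JSON."""
    return sum(part["Size"] for part in list_parts_output.get("Parts", []))


# Hypothetical sample; real output has more fields (ETag, LastModified, ...).
sample = json.loads("""
{"Parts": [
  {"PartNumber": 1, "Size": 104857600},
  {"PartNumber": 2, "Size": 104857600},
  {"PartNumber": 3, "Size": 52428800}
]}
""")

total = upload_bytes(sample)
gb = total / 1024**3
print(f"{total} bytes ({gb:.2f} GB), ~${gb * 0.023:.4f}/month")
```

For uploads with many parts the CLI output may be paginated, so a real audit would need to collect every page before summing.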

S3 Intelligent-Tiering — When Access Patterns Are Unpredictable

Lifecycle rules work when you know how your access pattern changes over time. But what about buckets where access is genuinely unpredictable — a media archive where some old files get viral traffic, or a dataset that gets queried unpredictably?

S3 Intelligent-Tiering monitors access patterns per object and automatically moves data between tiers:

- Accessed: stays in Frequent Access (Standard pricing)

- Not accessed for 30 days: moves to Infrequent Access tier

- Not accessed for 90 days: moves to Archive Instant Access tier

- Optional, opt-in archive tiers: Archive Access after 90 or more days without access, and Deep Archive Access after 180 or more days

There's a small monitoring fee ($0.0025 per 1,000 objects/month) but no retrieval fees and no minimum duration charges for the active tiers.
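As a rough model of the automatic tiers and the monitoring fee, consider the sketch below. It covers only the three tiers that need no opt-in, treats "not accessed" as a simple day count, and uses hypothetical function names.

```python
def intelligent_tier(days_since_last_access: int) -> str:
    """Tier an object would sit in, based on days since it was last read."""
    if days_since_last_access >= 90:
        return "Archive Instant Access"
    if days_since_last_access >= 30:
        return "Infrequent Access"
    return "Frequent Access"


def monitoring_fee(object_count: int) -> float:
    """Monthly monitoring charge in USD at $0.0025 per 1,000 objects."""
    return object_count / 1000 * 0.0025


for idle in (3, 45, 120):
    print(idle, "days idle ->", intelligent_tier(idle))
print(f"1M objects: ${monitoring_fee(1_000_000):.2f}/month in monitoring")
```

Reading an object resets the count, which is why a file that suddenly becomes popular lands back in Frequent Access without a retrieval fee.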

Enable it via lifecycle transition to INTELLIGENT_TIERING:

    {
      "Rules": [
        {
          "ID": "intelligent-tiering-after-30-days",
          "Status": "Enabled",
          "Filter": { "Prefix": "media/" },
          "Transitions": [{ "Days": 30, "StorageClass": "INTELLIGENT_TIERING" }]
        }
      ]
    }

For objects larger than 128 KB in buckets with irregular access patterns, Intelligent-Tiering nearly always beats a manually tuned lifecycle rule.
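Object size matters because the monitoring fee is charged per object while the saving is per GB. A back-of-the-envelope sketch of the break-even size follows. It assumes the Infrequent Access and Archive Instant Access tiers are priced like Standard-IA (~$0.0125) and Glacier Instant (~$0.004), and that the object stays idle for the whole month; the function name is hypothetical.

```python
MONITORING_FEE_PER_OBJECT = 0.0025 / 1000  # USD per object per month
STANDARD_PRICE = 0.023                     # USD per GB-month


def break_even_kb(tier_price_per_gb: float) -> float:
    """Object size (KB) at which one idle month's saving equals the fee."""
    saving_per_gb = STANDARD_PRICE - tier_price_per_gb
    break_even_gb = MONITORING_FEE_PER_OBJECT / saving_per_gb
    return break_even_gb * 1024 * 1024


print(f"Infrequent Access tier:      ~{break_even_kb(0.0125):.0f} KB")
print(f"Archive Instant Access tier: ~{break_even_kb(0.004):.0f} KB")
```

Under these assumptions the payoff is thin for objects only slightly above 128 KB and grows quickly with object size, so the case for Intelligent-Tiering is strongest on buckets dominated by larger files.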

S3 Storage Lens — Find the Waste First

Before writing rules, you should know what's actually in your buckets. S3 Storage Lens is a free account-level analytics dashboard that shows:

- Total storage by bucket, region, storage class

- Percentage of data in each storage class

- Object count, average object size

- Incomplete multipart upload bytes

- Noncurrent version bytes

Enable it from the S3 console under Storage Lens → Dashboards → Create. The default dashboard is free and retains 14 days of metrics. The advanced tier ($0.20 per million objects monitored per month) adds activity metrics like request counts per bucket.

Run this for a quick inventory of every bucket and its region (buckets in us-east-1 report None):

    aws s3api list-buckets --query 'Buckets[].Name' --output text | \
      tr '\t' '\n' | \
      xargs -I{} aws s3api get-bucket-location --bucket {} \
        --query "['{}', LocationConstraint]" --output text

For a detailed per-bucket size report, query CloudWatch once per storage class (swap StorageType for values such as StandardIAStorage):

    aws cloudwatch get-metric-statistics \
      --namespace AWS/S3 \
      --metric-name BucketSizeBytes \
      --dimensions Name=BucketName,Value=my-bucket Name=StorageType,Value=StandardStorage \
      --start-time 2026-03-01T00:00:00Z \
      --end-time 2026-03-20T00:00:00Z \
      --period 86400 \
      --statistics Average

A Practical Rule Set for a Production Application

Here's a complete lifecycle configuration covering logs, application data, versioning cleanup, and multipart cleanup in a single policy:

    {
      "Rules": [
        {
          "ID": "logs-tiering",
          "Status": "Enabled",
          "Filter": { "Prefix": "logs/" },
          "Transitions": [
            { "Days": 30, "StorageClass": "STANDARD_IA" },
            { "Days": 90, "StorageClass": "GLACIER_IR" },
            { "Days": 365, "StorageClass": "DEEP_ARCHIVE" }
          ],
          "Expiration": { "Days": 730 }
        },
        {
          "ID": "app-data-tiering",
          "Status": "Enabled",
          "Filter": { "Prefix": "uploads/" },
          "Transitions": [{ "Days": 90, "StorageClass": "INTELLIGENT_TIERING" }]
        },
        {
          "ID": "version-cleanup",
          "Status": "Enabled",
          "Filter": {},
          "NoncurrentVersionTransitions": [
            { "NoncurrentDays": 30, "StorageClass": "STANDARD_IA" }
          ],
          "NoncurrentVersionExpiration": {
            "NoncurrentDays": 90,
            "NewerNoncurrentVersions": 5
          },
          "Expiration": { "ExpiredObjectDeleteMarker": true }
        },
        {
          "ID": "abort-multipart",
          "Status": "Enabled",
          "Filter": {},
          "AbortIncompleteMultipartUpload": { "DaysAfterInitiation": 7 }
        }
      ]
    }

Save it as production-lifecycle.json, then apply it:

    aws s3api put-bucket-lifecycle-configuration \
      --bucket my-production-bucket \
      --lifecycle-configuration file://production-lifecycle.json

What to Expect in Savings

Results vary by workload, but here's a realistic breakdown for a team storing 2 TB of logs and 500 GB of user uploads:

| Before | After | Monthly cost (before → after) |
|---|---|---|
| 2 TB logs in Standard | Tiered across IA / Glacier | ~$47 → ~$6 |
| 500 GB uploads in Standard | Intelligent-Tiering | ~$11.50 → ~$6–8 |
| Uncleaned noncurrent versions (est. 800 GB) | Cleaned up | ~$18.40 → $0 |
| Abandoned multipart uploads (est. 50 GB) | Cleaned up | ~$1.15 → $0 |
| **Total** | | **~$78/month → ~$14/month** |

That's roughly an 80% reduction in S3 spend with zero change to your application code. The trade-off is retrieval: data that has moved to Deep Archive takes hours to restore, and the infrequent-access classes charge a retrieval fee, so keep anything you need instantly and often out of those tiers.

Summary

S3 lifecycle rules are the lowest-effort, highest-ROI change you can make to an AWS bill. The work is:

- Audit — use Storage Lens or CloudWatch to see what's in each bucket and what storage class it's in

- Define retention — decide how long data needs to be instantly accessible vs. archivable vs. deletable

- Write the rules — transitions + noncurrent version expiration + multipart cleanup

- Apply and monitor — costs drop over 30–90 days as existing objects transition; CloudWatch metrics confirm it's working

The one rule to apply to every bucket, today, with no analysis required:

    { "AbortIncompleteMultipartUpload": { "DaysAfterInitiation": 7 } }

Everything else follows once you know what you're storing.