A three-tier architecture separates your application into three independent layers: presentation, application, and data. Each tier can scale, fail, and be secured independently — which is exactly what production systems need.

This guide walks through building a production-grade setup using CloudFront + ALB (presentation), ECS Fargate (application), and Aurora PostgreSQL (data) — all running inside a multi-AZ VPC.

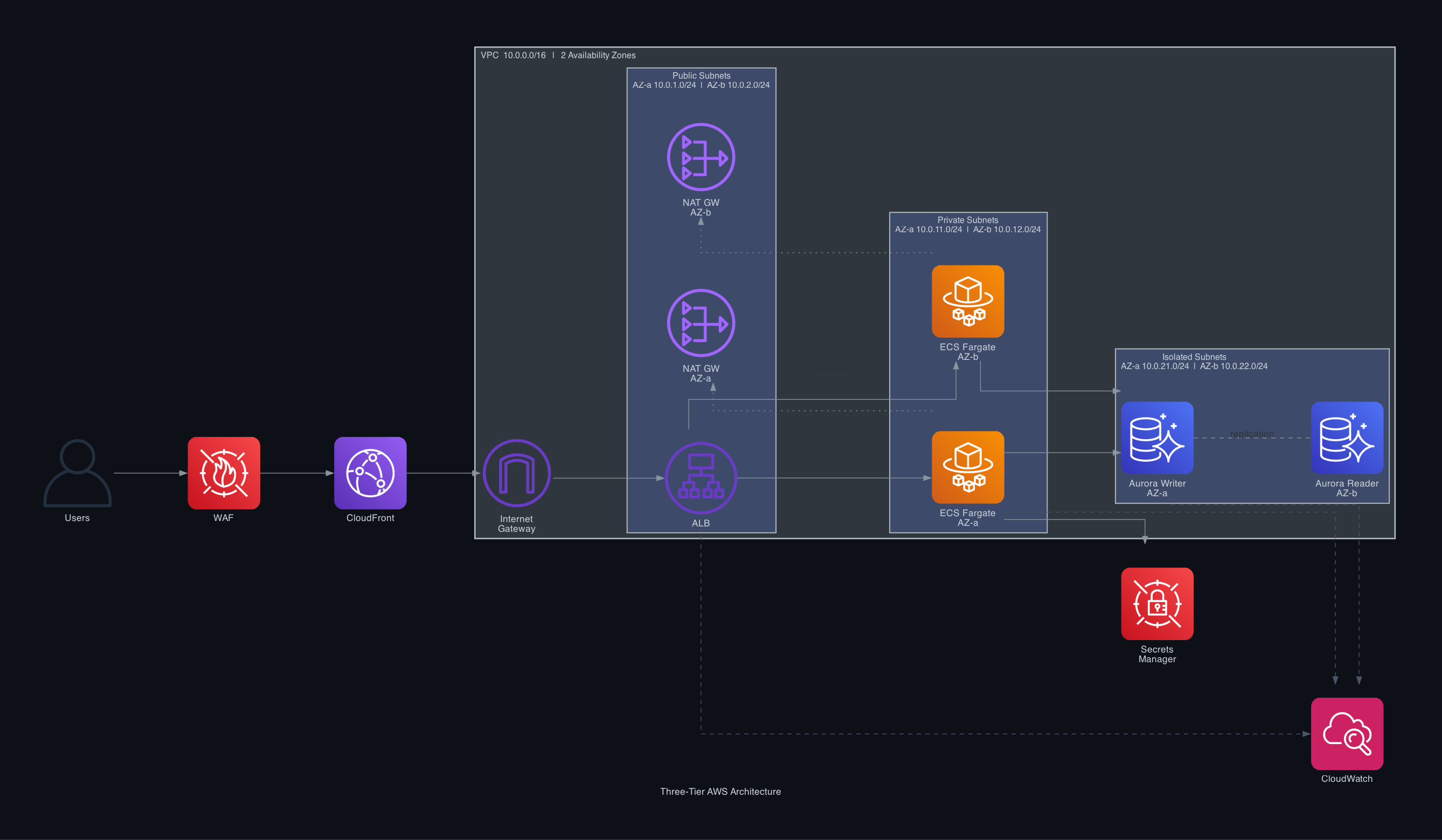

Architecture Overview

The traffic flow from a user request to the database looks like this:

- User hits CloudFront — cached at the nearest edge location

- CloudFront passes requests through AWS WAF for threat filtering

- Cache misses forward to the Application Load Balancer inside the VPC

- ALB routes to healthy ECS Fargate tasks (no servers to manage)

- Fargate tasks query Aurora PostgreSQL — writer for writes, reader for reads

- Secrets Manager injects DB credentials at runtime (no hardcoded passwords)

- CloudWatch collects metrics and logs from every tier

What You'll Build

| Component | Service | Subnet Type |
|---|---|---|
| CDN + WAF | CloudFront + AWS WAF | Edge (global) |
| Load balancer | Application Load Balancer | Public (10.0.1.0/24, 10.0.2.0/24) |
| Outbound routing | NAT Gateway × 2 | Public (one per AZ) |
| App containers | ECS Fargate | Private (10.0.11.0/24, 10.0.12.0/24) |
| Database | Aurora PostgreSQL | Isolated (10.0.21.0/24, 10.0.22.0/24) |

Prerequisites

- AWS CLI configured (`aws configure`)

- Docker installed locally for building container images

- An ECR repository for your container image

- A registered domain (optional, for custom CloudFront domain)
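For the ECR prerequisite, pushing an image could look roughly like the sketch below. The helper names `ecr_image_uri` and `push_image` are illustrative, not part of the AWS CLI, and the sketch assumes the repository already exists and that a Dockerfile sits in the current directory.

```shell
# Build the ECR image URI from account ID, region, repository, and tag.
ecr_image_uri() {
  echo "$1.dkr.ecr.$2.amazonaws.com/$3:$4"
}

# Hypothetical helper: log Docker in to ECR, then build and push the image.
# Usage: push_image <account-id> <region> <repository> <tag>
push_image() {
  uri="$(ecr_image_uri "$1" "$2" "$3" "$4")"
  # ${uri%%/*} strips everything from the first slash, leaving the registry host.
  aws ecr get-login-password --region "$2" |
    docker login --username AWS --password-stdin "${uri%%/*}"
  docker build -t "$uri" .
  docker push "$uri"
}
```

For example, `push_image <account> us-east-1 my-app latest` would produce the image URI that the task definition in Step 3 references.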

Step 1 — VPC and Networking

The VPC spans two Availability Zones with three subnet tiers: public, private app, and isolated DB.
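The subnet layout follows a simple numbering scheme: each tier gets a base offset in the third octet (0, 10, 20) and each AZ adds 1 or 2. The short sketch below only prints that plan and calls no AWS API; the function name `subnet_plan` is illustrative.

```shell
# Print "tier az cidr" for the six subnets used in this guide.
subnet_plan() {
  for tier in public:0 private:10 isolated:20; do
    name="${tier%%:*}"   # text before the colon
    base="${tier##*:}"   # third-octet offset after the colon
    i=1
    for az in us-east-1a us-east-1b; do
      echo "$name $az 10.0.$((base + i)).0/24"
      i=$((i + 1))
    done
  done
}

subnet_plan
```

Running it lists the public subnets as 10.0.1.0/24 and 10.0.2.0/24, the private ones as 10.0.11.0/24 and 10.0.12.0/24, and the isolated ones as 10.0.21.0/24 and 10.0.22.0/24.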

Create the VPC:

aws ec2 create-vpc --cidr-block 10.0.0.0/16 \
  --tag-specifications 'ResourceType=vpc,Tags=[{Key=Name,Value=my-app-vpc}]'

Create 6 subnets (2 per tier across AZ-a and AZ-b):

# Public subnets (ALB + NAT Gateways)
aws ec2 create-subnet --vpc-id <vpc-id> --cidr-block 10.0.1.0/24 --availability-zone us-east-1a
aws ec2 create-subnet --vpc-id <vpc-id> --cidr-block 10.0.2.0/24 --availability-zone us-east-1b

# Private subnets (ECS Fargate tasks)
aws ec2 create-subnet --vpc-id <vpc-id> --cidr-block 10.0.11.0/24 --availability-zone us-east-1a
aws ec2 create-subnet --vpc-id <vpc-id> --cidr-block 10.0.12.0/24 --availability-zone us-east-1b

# Isolated subnets (Aurora, no internet route at all)
aws ec2 create-subnet --vpc-id <vpc-id> --cidr-block 10.0.21.0/24 --availability-zone us-east-1a
aws ec2 create-subnet --vpc-id <vpc-id> --cidr-block 10.0.22.0/24 --availability-zone us-east-1b

Attach an Internet Gateway and add a route for public subnets:

aws ec2 create-internet-gateway
aws ec2 attach-internet-gateway --vpc-id <vpc-id> --internet-gateway-id <igw-id>

# Public route table: 0.0.0.0/0 → IGW
aws ec2 create-route --route-table-id <public-rt-id> \
  --destination-cidr-block 0.0.0.0/0 --gateway-id <igw-id>

Create a NAT Gateway in each public subnet (ECS needs outbound internet for ECR image pulls):

# Allocate Elastic IPs first (run once per NAT Gateway)
aws ec2 allocate-address --domain vpc

aws ec2 create-nat-gateway --subnet-id <public-subnet-a> --allocation-id <eip-a>
aws ec2 create-nat-gateway --subnet-id <public-subnet-b> --allocation-id <eip-b>

Point each private subnet's route table at its own NAT Gateway. This avoids cross-AZ NAT charges and ensures AZ-level resilience.

Best practice: Never use a single NAT Gateway. If that AZ goes down, all private-subnet outbound traffic fails.
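Pointing each private subnet at the NAT Gateway in its own AZ comes down to one default route and one route-table association per subnet. A minimal sketch, assuming a separate route table has already been created for each private subnet (the helper name `route_private_subnet` is hypothetical):

```shell
# Send a private subnet's outbound traffic through the NAT Gateway in its own AZ.
# Usage: route_private_subnet <route-table-id> <nat-gateway-id> <subnet-id>
route_private_subnet() {
  # Default route for everything outside the VPC goes to the NAT Gateway.
  aws ec2 create-route --route-table-id "$1" \
    --destination-cidr-block 0.0.0.0/0 --nat-gateway-id "$2"
  # Attach the route table to the private subnet.
  aws ec2 associate-route-table --route-table-id "$1" --subnet-id "$3"
}
```

Call it once per AZ, for example `route_private_subnet <private-rt-a> <nat-a> <private-subnet-a>` and then the same with the AZ-b IDs.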

Step 2 — Data Tier: Aurora PostgreSQL

Build the stack bottom-up, starting with Aurora, because the ECS task definition needs the DB endpoint.

Create a DB subnet group spanning both isolated subnets:

aws rds create-db-subnet-group \
  --db-subnet-group-name aurora-subnet-group \
  --db-subnet-group-description "Aurora isolated subnets" \
  --subnet-ids <isolated-subnet-a> <isolated-subnet-b>

Create the Aurora PostgreSQL cluster:

# --manage-master-user-password stores the password in Secrets Manager automatically
aws rds create-db-cluster \
  --db-cluster-identifier my-aurora-cluster \
  --engine aurora-postgresql \
  --engine-version 15.4 \
  --master-username dbadmin \
  --manage-master-user-password \
  --db-subnet-group-name aurora-subnet-group \
  --vpc-security-group-ids <db-sg-id> \
  --backup-retention-period 7 \
  --storage-encrypted \
  --deletion-protection

Add two instances (writer in AZ-a, reader in AZ-b):

aws rds create-db-instance \
  --db-instance-identifier my-aurora-writer \
  --db-cluster-identifier my-aurora-cluster \
  --db-instance-class db.t4g.medium \
  --engine aurora-postgresql \
  --availability-zone us-east-1a

aws rds create-db-instance \
  --db-instance-identifier my-aurora-reader \
  --db-cluster-identifier my-aurora-cluster \
  --db-instance-class db.t4g.medium \
  --engine aurora-postgresql \
  --availability-zone us-east-1b
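Since the application tier needs the database endpoints, it can help to wait for both instances and then read the writer and reader endpoints from the cluster. A rough sketch, using the instance and cluster identifiers from the commands above (the helper name `aurora_endpoints` is hypothetical):

```shell
# Wait for both instances, then print the writer and reader endpoints
# of the cluster on one tab-separated line.
aurora_endpoints() {
  cluster="${1:-my-aurora-cluster}"
  aws rds wait db-instance-available --db-instance-identifier my-aurora-writer
  aws rds wait db-instance-available --db-instance-identifier my-aurora-reader
  aws rds describe-db-clusters --db-cluster-identifier "$cluster" \
    --query 'DBClusters[0].[Endpoint,ReaderEndpoint]' --output text
}
```

The first value is the cluster (writer) endpoint for writes and the second is the reader endpoint for read-only queries.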

Security group for Aurora — only allow port 5432 from the ECS security group:

aws ec2 authorize-security-group-ingress \
  --group-id <db-sg-id> \
  --protocol tcp --port 5432 \
  --source-group <ecs-sg-id>

Best practices applied:

- `--manage-master-user-password` stores credentials in Secrets Manager with auto-rotation

- `--storage-encrypted` enables KMS encryption at rest

- `--deletion-protection` prevents accidental deletion

- Reader instance in a separate AZ enables read scaling and failover, typically in under a minute

Step 3 — Application Tier: ECS Fargate

Create the ECS cluster:

aws ecs create-cluster \
  --cluster-name my-app-cluster \
  --settings name=containerInsights,value=enabled

Task definition (save as task-def.json):

{
  "family": "my-app-task",
  "networkMode": "awsvpc",
  "requiresCompatibilities": ["FARGATE"],
  "cpu": "512",
  "memory": "1024",
  "executionRoleArn": "arn:aws:iam::<account>:role/ecsTaskExecutionRole",
  "taskRoleArn": "arn:aws:iam::<account>:role/ecsTaskRole",
  "containerDefinitions": [
    {
      "name": "app",
      "image": "<account>.dkr.ecr.us-east-1.amazonaws.com/my-app:latest",
      "portMappings": [{ "containerPort": 3000, "protocol": "tcp" }],
      "secrets": [
        {
          "name": "DATABASE_URL",
          "valueFrom": "arn:aws:secretsmanager:us-east-1:<account>:secret:my-aurora-cluster"
        }
      ],
      "logConfiguration": {
        "logDriver": "awslogs",
        "options": {
          "awslogs-group": "/ecs/my-app",
          "awslogs-region": "us-east-1",
          "awslogs-stream-prefix": "ecs"
        }
      }
    }
  ]
}

The `valueFrom` ARN must point to a secret whose value is the full connection string. The secret that `--manage-master-user-password` creates has an RDS-generated name and stores `username` and `password` as JSON keys, so either keep the connection string in a secret of your own or inject individual keys by appending a suffix such as `:password::` to the secret ARN.

aws ecs register-task-definition --cli-input-json file://task-def.json

Create the ECS service with tasks spread across both AZs:

aws ecs create-service \
  --cluster my-app-cluster \
  --service-name my-app-service \
  --task-definition my-app-task \
  --desired-count 2 \
  --launch-type FARGATE \
  --network-configuration "awsvpcConfiguration={subnets=[<private-subnet-a>,<private-subnet-b>],securityGroups=[<ecs-sg-id>],assignPublicIp=DISABLED}" \
  --load-balancers "targetGroupArn=<tg-arn>,containerName=app,containerPort=3000"

The target group passed to `--load-balancers` must already exist and be attached to an ALB listener, so create the ALB, target group, and listener from Step 4 before running this command.

Auto Scaling (CPU-based target tracking):

aws application-autoscaling register-scalable-target \
  --service-namespace ecs \
  --resource-id service/my-app-cluster/my-app-service \
  --scalable-dimension ecs:service:DesiredCount \
  --min-capacity 2 --max-capacity <max-tasks>

aws application-autoscaling put-scaling-policy \
  --policy-name cpu-tracking \
  --service-namespace ecs \
  --resource-id service/my-app-cluster/my-app-service \
  --scalable-dimension ecs:service:DesiredCount \
  --policy-type TargetTrackingScaling \
  --target-tracking-scaling-policy-configuration \
  "TargetValue=60,PredefinedMetricSpecification={PredefinedMetricType=ECSServiceAverageCPUUtilization}"

Best practices applied:

- `networkMode: awsvpc` gives each task its own ENI and security group (best for isolation)

- `assignPublicIp=DISABLED` keeps tasks unreachable from the internet directly

- Secrets Manager reference in the task definition: credentials are resolved at task start and are never stored in the task definition or in code

- `containerInsights=enabled` provides detailed CPU/memory metrics per task

- Minimum 2 tasks ensures one survives an AZ failure
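The task definition logs to `/ecs/my-app`, and the `awslogs` driver does not create that log group unless the `awslogs-create-group` option is set and the execution role is allowed to create it. One way to handle this is to create the group up front, as in the sketch below. The helper name `create_app_log_group` and the 30-day retention are assumptions for illustration, not values from this guide.

```shell
# Create the log group the task definition's awslogs driver writes to,
# and cap how long log events are kept.
create_app_log_group() {
  group="${1:-/ecs/my-app}"
  days="${2:-30}"   # illustrative retention period
  aws logs create-log-group --log-group-name "$group"
  aws logs put-retention-policy --log-group-name "$group" --retention-in-days "$days"
}
```

Run `create_app_log_group` once before the first task starts; tasks fail to launch if the configured log group is missing.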

Step 4 — Presentation Tier: ALB, CloudFront, WAF

Create the Application Load Balancer in both public subnets:

aws elbv2 create-load-balancer \
  --name my-app-alb \
  --subnets <public-subnet-a> <public-subnet-b> \
  --security-groups <alb-sg-id> \
  --scheme internet-facing \
  --type application

Create a target group for ECS tasks (IP mode required for Fargate):

aws elbv2 create-target-group \
  --name my-app-tg \
  --protocol HTTP --port 3000 \
  --vpc-id <vpc-id> \
  --target-type ip \
  --health-check-path /health \
  --health-check-interval-seconds 30

Add HTTPS listener (requires an ACM certificate):

aws elbv2 create-listener \
  --load-balancer-arn <alb-arn> \
  --protocol HTTPS --port 443 \
  --certificates CertificateArn=<acm-cert-arn> \
  --default-actions Type=forward,TargetGroupArn=<tg-arn>

Create CloudFront distribution pointing at the ALB:

aws cloudfront create-distribution --distribution-config '{
  "CallerReference": "my-app-distribution-1",
  "Comment": "",
  "Origins": {
    "Items": [{
      "Id": "alb-origin",
      "DomainName": "<alb-dns-name>",
      "CustomOriginConfig": {
        "HTTPPort": 80,
        "HTTPSPort": 443,
        "OriginProtocolPolicy": "https-only",
        "OriginSSLProtocols": { "Items": ["TLSv1.2"], "Quantity": 1 }
      }
    }],
    "Quantity": 1
  },
  "DefaultCacheBehavior": {
    "TargetOriginId": "alb-origin",
    "ViewerProtocolPolicy": "redirect-to-https",
    "CachePolicyId": "4135ea2d-6df8-44a3-9df3-4b5a84be39ad",
    "OriginRequestPolicyId": "b689b0a8-53d0-40ab-baf2-68738e2966ac"
  },
  "Enabled": true,
  "WebACLId": "<waf-acl-arn>"
}'

Restrict the ALB security group's inbound rules to the CloudFront managed prefix list:

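# Get CloudFront prefix list ID
aws ec2 describe-managed-prefix-lists \
  --filters Name=prefix-list-name,Values=com.amazonaws.global.cloudfront.origin-facing \
  --query 'PrefixLists[].PrefixListId' --output text

# Prefix lists must be passed through --ip-permissions
aws ec2 authorize-security-group-ingress \
  --group-id <alb-sg-id> \
  --ip-permissions 'IpProtocol=tcp,FromPort=443,ToPort=443,PrefixListIds=[{PrefixListId=<cloudfront-prefix-list-id>}]'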

This ensures the ALB only accepts traffic from CloudFront — direct access to the ALB URL is blocked.
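One way to check this from a terminal is to look at the `X-Cache` response header, which CloudFront sets to values such as "Hit from cloudfront" or "Miss from cloudfront". The small filter below is a sketch with a hypothetical name, `cache_status`; it only parses headers passed on stdin.

```shell
# Print the X-Cache header value (lower-cased) from HTTP response headers on stdin.
cache_status() {
  tr -d '\r' | tr '[:upper:]' '[:lower:]' | sed -n 's/^x-cache: *//p'
}
```

Usage: `curl -sI https://<distribution-domain>/health | cache_status`. A direct request such as `curl --max-time 5 https://<alb-dns-name>/health` should time out instead, because the security group silently drops traffic that does not come from CloudFront.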

Best practices applied:

- HTTPS-only from CloudFront to ALB (`OriginProtocolPolicy: https-only`)

- CloudFront prefix list on ALB SG blocks direct ALB access, forcing all traffic through WAF

- `redirect-to-https` viewer policy means no plain HTTP reaches your app

- WAF attached at CloudFront level filters traffic before it enters the VPC

Security Group Rules Summary

| Security Group | Inbound | From |
|---|---|---|
| ALB SG | 443 TCP | CloudFront prefix list |
| ECS SG | 3000 TCP | ALB SG |
| Aurora SG | 5432 TCP | ECS SG |

This chain ensures each tier only accepts traffic from the tier directly above it. Aurora is completely unreachable from the internet.
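The two security-group-to-security-group links in this chain use the same CLI call with different ports. A minimal sketch, with a hypothetical wrapper name `allow_from_sg`:

```shell
# Allow inbound TCP on one port from a source security group.
# Usage: allow_from_sg <target-sg-id> <port> <source-sg-id>
allow_from_sg() {
  aws ec2 authorize-security-group-ingress \
    --group-id "$1" --protocol tcp --port "$2" --source-group "$3"
}

# ECS tasks accept traffic only from the ALB; Aurora only from ECS tasks:
#   allow_from_sg <ecs-sg-id> 3000 <alb-sg-id>
#   allow_from_sg <db-sg-id>  5432 <ecs-sg-id>
```

Referencing a security group as the source, rather than a CIDR range, means the rule keeps working as tasks are replaced and their private IPs change.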

Monitoring and Observability

Enable these with no code changes:

- VPC Flow Logs — captures all network traffic for audit and debugging

- CloudWatch Container Insights — CPU, memory, network per ECS task (enabled at cluster creation)

- ALB Access Logs — request-level logs to S3 with latency, status codes, target IDs

- Aurora Performance Insights — query-level DB performance (enable in the cluster settings)

- CloudWatch Alarms: alert on ECS `CPUUtilization` > 80%, `DatabaseConnections` > 80% of the connection limit, `TargetResponseTime` > 1s
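As one example of the alarms listed above, the CPU alarm could be created roughly as follows. The alarm name, the three 1-minute evaluation periods, and the SNS topic used for notifications are assumptions for illustration; the helper name `create_cpu_alarm` is hypothetical.

```shell
# Alarm when the service's average CPU stays above 80% for three
# consecutive 1-minute periods. $1 is the ARN of an existing SNS topic.
create_cpu_alarm() {
  aws cloudwatch put-metric-alarm \
    --alarm-name my-app-cpu-high \
    --namespace AWS/ECS --metric-name CPUUtilization \
    --dimensions Name=ClusterName,Value=my-app-cluster Name=ServiceName,Value=my-app-service \
    --statistic Average --period 60 --evaluation-periods 3 \
    --threshold 80 --comparison-operator GreaterThanThreshold \
    --alarm-actions "$1"
}
```

The threshold sits above the 60% auto scaling target from Step 3, so the alarm fires only when scaling has not brought CPU back down.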

# Enable VPC Flow Logs
aws ec2 create-flow-logs \
  --resource-type VPC --resource-ids <vpc-id> \
  --traffic-type ALL \
  --log-destination-type cloud-watch-logs \
  --log-group-name /aws/vpc/flowlogs \
  --deliver-logs-permission-arn <flow-logs-role-arn>

Cost Optimisation Tips

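- NAT Gateway is the biggest surprise bill. Use VPC endpoints for ECR, S3, and Secrets Manager to keep that traffic off the NAT Gateways. Only the S3 Gateway endpoint is free; the ECR and Secrets Manager Interface endpoints are billed per hour and per GB, so compare them against your NAT data-processing charges.

- Fargate Spot can cut compute costs by up to 70% for interruption-tolerant workloads. Mix On-Demand (minimum 2) with Spot for scale-out tasks.

- Aurora Serverless v2 instead of provisioned instances scales capacity down during off-peak hours (down to zero only on engine versions that support auto-pause), which is ideal if you have variable traffic.

- CloudFront caching reduces the number of requests that reach the ALB and ECS. Set appropriate `Cache-Control` headers on static responses, and use a caching cache policy for those paths: the `CachePolicyId` in Step 4 is the managed CachingDisabled policy.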
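The VPC endpoint tip can be sketched as one gateway endpoint for S3 plus interface endpoints for ECR and Secrets Manager. The helper name `create_vpc_endpoints` and its argument order are hypothetical; the security group passed in is assumed to allow inbound 443 from the ECS tasks.

```shell
# Usage: create_vpc_endpoints <vpc-id> <region> "<rt-id> <rt-id>" "<subnet-id> <subnet-id>" <endpoint-sg-id>
create_vpc_endpoints() {
  vpc_id="$1" region="$2" route_tables="$3" subnets="$4" sg_id="$5"
  # Gateway endpoint for S3: attached to the private route tables.
  # $route_tables is left unquoted on purpose so it splits into separate IDs.
  aws ec2 create-vpc-endpoint --vpc-id "$vpc_id" \
    --vpc-endpoint-type Gateway \
    --service-name "com.amazonaws.$region.s3" \
    --route-table-ids $route_tables
  # Interface endpoints: ECR needs both ecr.api and ecr.dkr.
  for svc in ecr.api ecr.dkr secretsmanager; do
    aws ec2 create-vpc-endpoint --vpc-id "$vpc_id" \
      --vpc-endpoint-type Interface \
      --service-name "com.amazonaws.$region.$svc" \
      --subnet-ids $subnets --security-group-ids "$sg_id" \
      --private-dns-enabled
  done
}
```

ECR stores image layers in S3, which is why the S3 gateway endpoint matters for image pulls as well.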

Quick Reference

| What | Command / Location |
|---|---|
| DB endpoint | `aws rds describe-db-clusters --query 'DBClusters[].Endpoint'` |
| DB credentials | AWS Secrets Manager → secret ARN from `aws rds describe-db-clusters --query 'DBClusters[].MasterUserSecret.SecretArn'` |
| Container logs | CloudWatch Logs → `/ecs/my-app` |
| ECS service status | `aws ecs describe-services --cluster my-app-cluster --services my-app-service` |
| Scale manually | `aws ecs update-service --cluster my-app-cluster --service my-app-service --desired-count 4` |
| Deploy new image | Update task definition revision → `aws ecs update-service ... --task-definition my-app-task:2` |
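The deploy row can be expanded into a small helper that switches the service to a new task definition revision and then waits for the rollout to settle. This is a sketch using the cluster, service, and task family names from this guide; the function name `deploy_revision` is hypothetical.

```shell
# Point the service at a task definition revision and block until
# the deployment has reached a steady state.
# Usage: deploy_revision <revision-number>
deploy_revision() {
  aws ecs update-service --cluster my-app-cluster --service my-app-service \
    --task-definition "my-app-task:$1"
  aws ecs wait services-stable --cluster my-app-cluster --services my-app-service
}
```

For example, `deploy_revision 2` rolls the service to `my-app-task:2`; the wait command exits with an error if the service does not stabilise within its polling window.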

Summary

The stack follows AWS Well-Architected Framework principles across five of its six pillars:

- Security — each tier isolated by security groups, secrets in Secrets Manager, WAF at the edge, encryption at rest and in transit

- Reliability — multi-AZ across all three tiers, ALB health checks, ECS auto-recovery, Aurora automatic failover

- Performance — CloudFront caches at the edge, ALB load-balances across tasks, Aurora reader handles read traffic

- Cost — Fargate eliminates idle EC2 capacity, pay per task-second; Aurora scales storage automatically

- Operations — Container Insights, Flow Logs, and Performance Insights give full-stack visibility with minimal setup